AI that moves money — safely, explainably, in production.

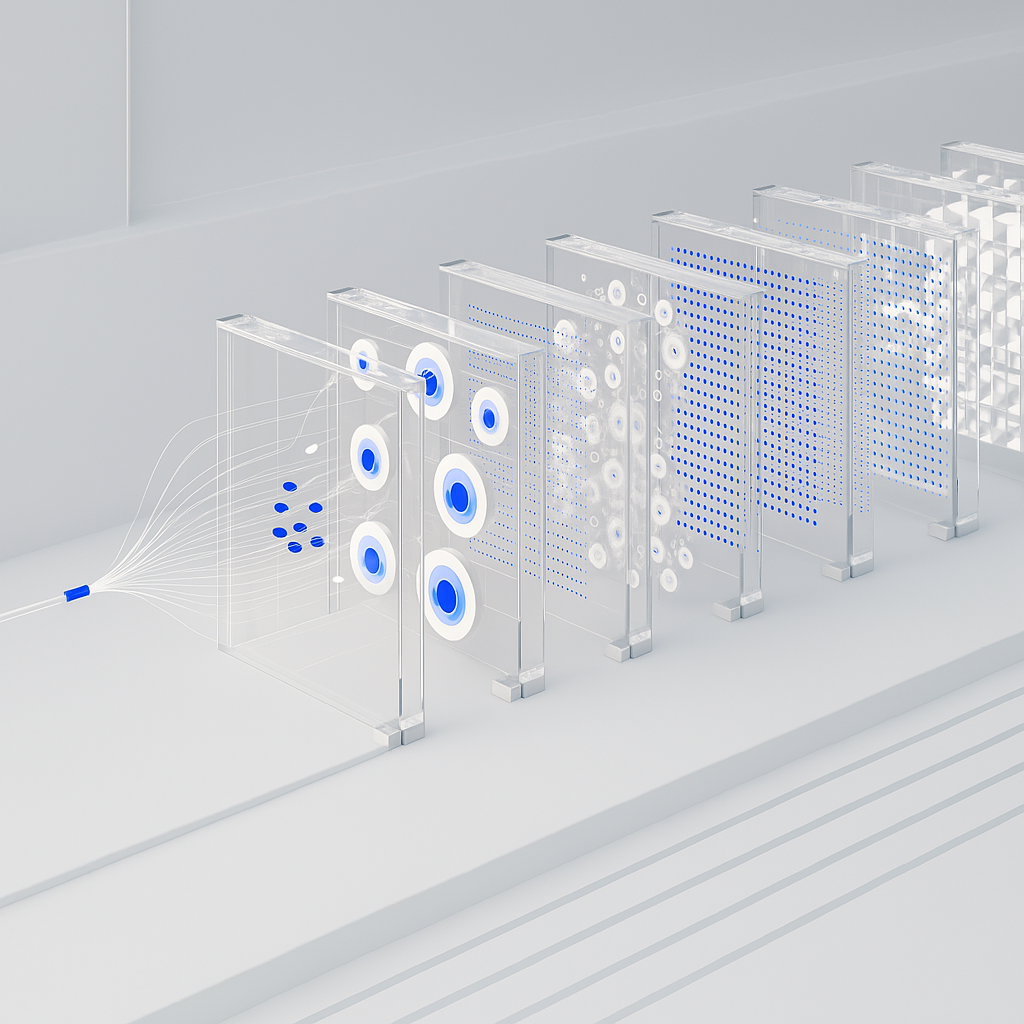

We embed AI-native engineers into banks and fintechs to ship copilots for fraud, AML, KYC, lending, and relationship managers — the kind your risk, compliance, and InfoSec teams will actually sign off on.

We cover everything from instant onboarding and real-time fraud detection to predictive lending, M&A data intelligence, and embedded-finance rails, all architected for zero-trust, MRM, and audit from day one.

The mandate

Turn regulated banking AI from a PowerPoint into a release lane.

The hard part isn't the model — it's shipping it through MRM, InfoSec, second line, and legacy cores without losing a quarter. We design inside those constraints, wire into your private tenant, and make explainability a first-class deliverable, not a retrofit.

What you get

- Real-time fraud & AML triage copilots — grounded, logged, reviewable.

- KYC / onboarding acceleration with document AI and policy-grounded reasoning.

- Credit & lending copilots: predictive underwriting, covenant tracking, portfolio Q&A.

- RM / advisor assistants on your product, compliance, and CRM context.

- Core modernization: agents over legacy mainframes, cores, and data warehouses.

Why it works

Why this approach wins.

01 · Principle

Regulator-ready by construction

MRM artifacts — model card, intended-use, monitoring plan, limitations, change log — ship with the feature. Sign-off is a review, not a rebuild.

02 · Principle

Fraud and AML that think in seconds

Agents triage alerts, dedupe cases, draft SARs, and surface rationale. Analysts spend their day on judgment calls, not on copy-paste.

03 · Principle

Your data never leaves the perimeter

We deploy inside your VPC / tenant (Bedrock, Azure OpenAI, on-prem open-weights). Zero-trust, data residency, and PII redaction are table stakes.

Outcomes

The outcomes we commit to.

−60%

onboarding turnaround time (TAT)

2×

fraud triage speed

100%

explainable decisions

0

bytes of data leave your tenant

Pain points

Do you recognize your team?

What's happening

- Your regulator just asked about your AI governance.

- Fraud losses are growing faster than analyst headcount.

- Onboarding TAT is bleeding new-account conversion.

- A challenger bank shipped an advisor copilot your stack can't match.

- Lending teams are drowning in unstructured covenant & credit memo work.

How it feels

- Cautious — one bad AI decision ends careers here.

- Frustrated that every AI pilot dies in second line.

- Envious of neobanks shipping things you're still scoping.

- Protective of customer trust above all else.

- Tired of vendors whose claims evaporate under regulator scrutiny.

Where it hurts

- MRM cycles that take 9–12 months per use case.

- Public LLM APIs blocked by InfoSec — no clear private path.

- No clean audit trail from model output to customer action.

- Silos between data science, risk, compliance, and the line of business.

- Legacy cores and data warehouses that AI tools can't reach.

What we ship

Workstreams, real artifacts, measurable outcomes.

Every engagement decomposes into clear workstreams you can ship and measure. Here's the playbook for this segment.

01

Fraud & AML copilot

- Queue integration

- Retrieval + rules layer

- Decision log

- Second-line review UX

02

KYC & onboarding AI

- Doc extraction

- Policy-grounded checks

- Exception workflow

- Audit trail

03

Lending & credit copilots

- Credit memo agent

- Covenant monitor

- Portfolio Q&A

- Risk dashboards

04

RM / advisor assistant

- Grounded RAG

- Compliance guardrails

- CRM + call-prep actions

- Supervisor views

05

Core & data modernization

- Integration layer

- Agent tools

- Data contracts

- Migration runway

After-state

What changes on the other side.

AI ships quarterly across fraud, AML, KYC, lending, and advisor workflows — inside your tenant, with full MRM artifacts, audit trails, and explainability. Analysts work on judgment; agents carry the load. The regulator reads your dashboards, not your slides.

What becomes possible

- 01 · Turn banking AI from an annual program into a quarterly release lane.

- 02 · Bring fraud and AML response to real time without growing headcount linearly.

- 03 · Unlock legacy core and data assets as first-class fuel for agentic products.

Concerns, answered

The usual concerns — handled.

Concern 01

“Our regulator hasn't approved GenAI in customer workflows.”

We start where regulators are comfortable — internal analyst copilots — with MRM packs ready. Customer-facing scope expands as evidence accumulates.

Concern 02

“Public LLMs are blocked by InfoSec.”

We deploy to your VPC / private tenant: Bedrock, Azure OpenAI, Vertex, or open-weights on your hardware. No customer data ever leaves your perimeter.

Concern 03

“Our core is 30 years old — nothing will integrate.”

We've wired agents over mainframes, legacy cores, and decades-old warehouses. We bring integration patterns, not rip-and-replace plans.

Concern 04

“We already have a ‘GenAI platform’ vendor.”

Good. We assess what they actually deliver against your MRM, grounding, and domain needs, and we layer — not thrash — on top of it.

Alternatives

Why us and not…

Big-4 GenAI practices

Deck-rich, deploy-poor. You pay for slides; we hand you production systems with MRM packs attached.

Horizontal LLM platforms

Strong tooling, weak banking grounding. We bring the BFSI muscle: fraud, AML, KYC, lending, MRM.

Neobank-style in-house squads

Fast but lean on governance. We bring the regulated-environment discipline without killing velocity.

Case studies

Where ideas become impact.

Behind every system we ship is a team that moved from uncertainty to measurable outcomes. A few recent ones.

Case 01 · Client

Wealth Management Company

Objective

The goal was to integrate AI tools into everyday work across all roles and increase overall productivity.

Results

85%

of employees use AI tools daily in their workflows

70%

of routine queries resolved via a GPT assistant within the first 2 weeks

5 min

average response time, down from 1 hour

52

ready-to-use prompts created for key scenarios (finance, presale, legal, HR)

12

AI agents deployed for quality, sales, finance, and executive dashboards

100%

prompts reviewed for data security compliance

Stack

ChatGPT Enterprise, n8n, Cursor, RAGDB (vector database), Power BI + Bloomberg GPT, Miro, Whisper / Coqui

Case 02 · Client

E-Commerce Platform

Objective

Automate customer support and optimize product recommendation systems using AI.

Results

60%

reduction in customer support tickets

3×

increase in product recommendation conversion rate

24/7

automated support coverage with an AI chatbot

8

custom AI workflows deployed across departments

40%

faster content generation for marketing campaigns

95%

customer satisfaction score with AI-assisted support

Stack

Anthropic API, LangChain, Pinecone, Next.js, Vercel, PostgreSQL, Redis, NanoClaw

Founder & team

Senior humans, AI-native craft.

100+

people trained

20+

companies transformed

9.4/10

avg. workshop rating

96%

AI adoption in 7 days

Talk to the founder

Mike Doroshenko

Product strategist and AI consultant with 10+ years of experience in digital product strategy and AI transformation. Author of corporate training programs used by leading companies.

Supported by 30+ experts from McKinsey, Google, and top tech companies.

Testimonials

Our clients said it best.

Patrik Dvořák

CEO, SECTOR 31 s.r.o.

“Vahue's responsiveness and accuracy were impressive. We highly recommend them.”

Philipp Lenz

Co-Founder, parloo.de

“There are a lot of companies that offer similar services but we've had an end-to-end good experience with them.”

Jacob Berg

CTO, Social Curator

“I appreciated the level of comfort Vahue made us feel. It was like being a part of a family.”

Georg Winkler

CEO, Xpertify

“The different and very profound skillset of the Vahue team was very impressive.”

Prasanna Elvis Eswara

Principal Consultant, Roost Digital

“They were proactive and seemed eager to build a relationship.”

Bartek Czerwinski

CTO, Quik

“Vahue has the ability to dive in and get the work done creatively with a lot of personal input.”

Steinar Aas

CEO & Co-Founder, Asio AS

“Their flexibility and genuine interest in finding the best solution for the product was impressive.”

Blog

Perspectives that matter.

Deploying LLMs Securely in Enterprise Environments

A practical guide to integrating large language models with sensitive business data while staying compliant and secure.

Evaluating Code Data Sources for Training Large Language Models

A practical comparison of the major code dataset sources — from open-source repos to dedicated coding teams — and how to choose the right one.

The Case for Human-Written Code in LLM Training

Why human-authored code remains essential for building reliable coding assistants — and where synthetic data falls short.

Contact

We're here to deliver

Tell us where you are and what you're trying to ship. We reply within 24 hours with a diagnosis, a shortlist of quick wins, and the smallest next step we'd recommend.

Get more ROI from AI. Get Vahue.